A couple of notes on where I am with my ESXi to Proxmox move.

What I have done:

- I finally bit the bullet and deleted all of my iSCSI LUNs for ESXi – they were simply wasting space at this point.

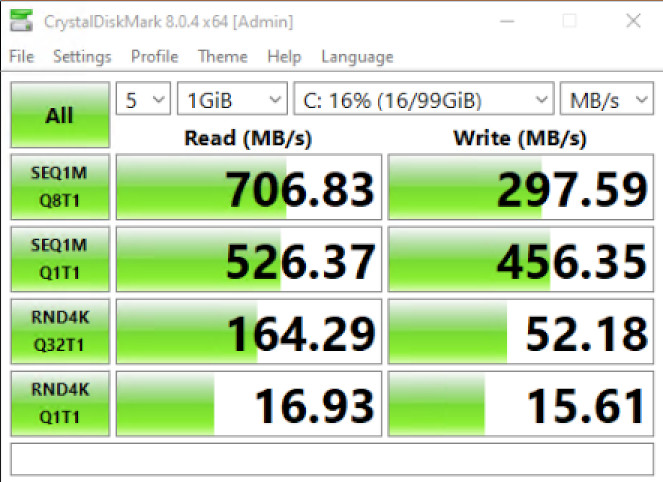

- I also moved from iSCSI to NFS for my Proxmox VMs. The main difference between the two is that iSCSI shares data at the block level, while NFS shares data at the file level. Performance is roughly comparable, though iSCSI can come out ahead in some situations. For my purposes NFS is fine.

- And NFS has the benefit that I can access the VMs on the NAS in Synology File Station. There is a level of comfort in being able to “see” all of my VMs without having to set up an iSCSI initiator.

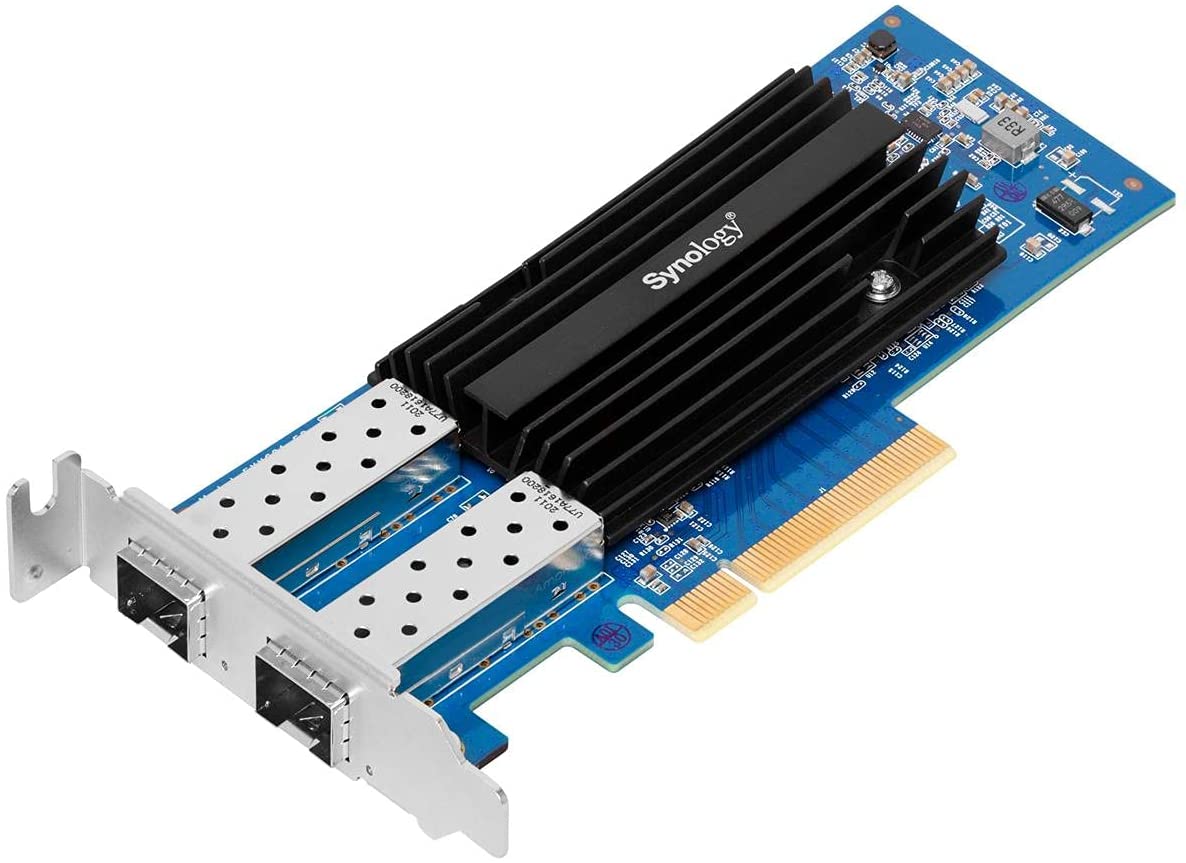

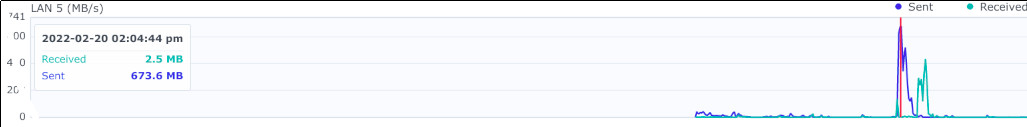

- I haven’t worked on network bonding for the NFS 10 GbE connections. Frankly, I do not think I will see any benefits and “added complexity equals added problems.”

- Both my “production” (PROD) Proxmox server (DL380 G9) and my “development” (DEV) Proxmox server (DL360 G8) can access the same NFS shares. That means that my ISOs are shared as well as my Proxmox backups. This also means that I can create a VM on the DEV box and, by backing up the VM to the shared backup location, restore it to the PROD box. While not the smoothest method, it is a rather neat workaround. I do have to be careful not to start a VM that is visible to both servers on both of them at once, otherwise “bad things” would happen.

- I have moved a number of full VMs to LXC containers. Things like my reverse proxy, a DNS server, Home Assistant (I don’t really have any devices for this yet but with Z-Wave it will give me something to do 🙂 ), and Pi-Hole. There is really no need to have a full-blown VM for these simple services. Proxmox makes it easy.

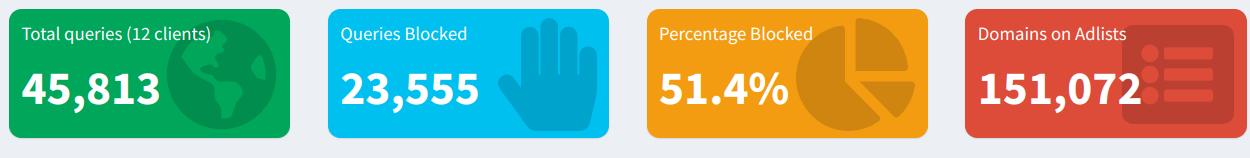

- Pi-Hole – not part of Proxmox (other than that it runs in an LXC container), but I finally got around to setting it up. A couple of additional configs were needed to get it working for my clients, as Pi-Hole sits on one subnet while my wired and wireless devices sit on other subnets. And, WOW!, the number of ads, etc. that are blocked is crazy. Here are the stats for the last 24 hours – 51.4% blocked requests?!?!?!

The To-Do (or think-about) list:

- Adding a Unifi Link Aggregation switch – while both Proxmox nodes are connected directly to the Synology NAS, they cannot connect directly to each other for storage. One node is using the 172.16.10.0/24 subnet and the other 172.16.20.0/24, with one of the two 10 GbE ports of the NAS assigned to each. With the switch I can have them both on the same subnet (yes, I know that I could have “broken” the 172.16.10.0/24 subnet in two using /25).

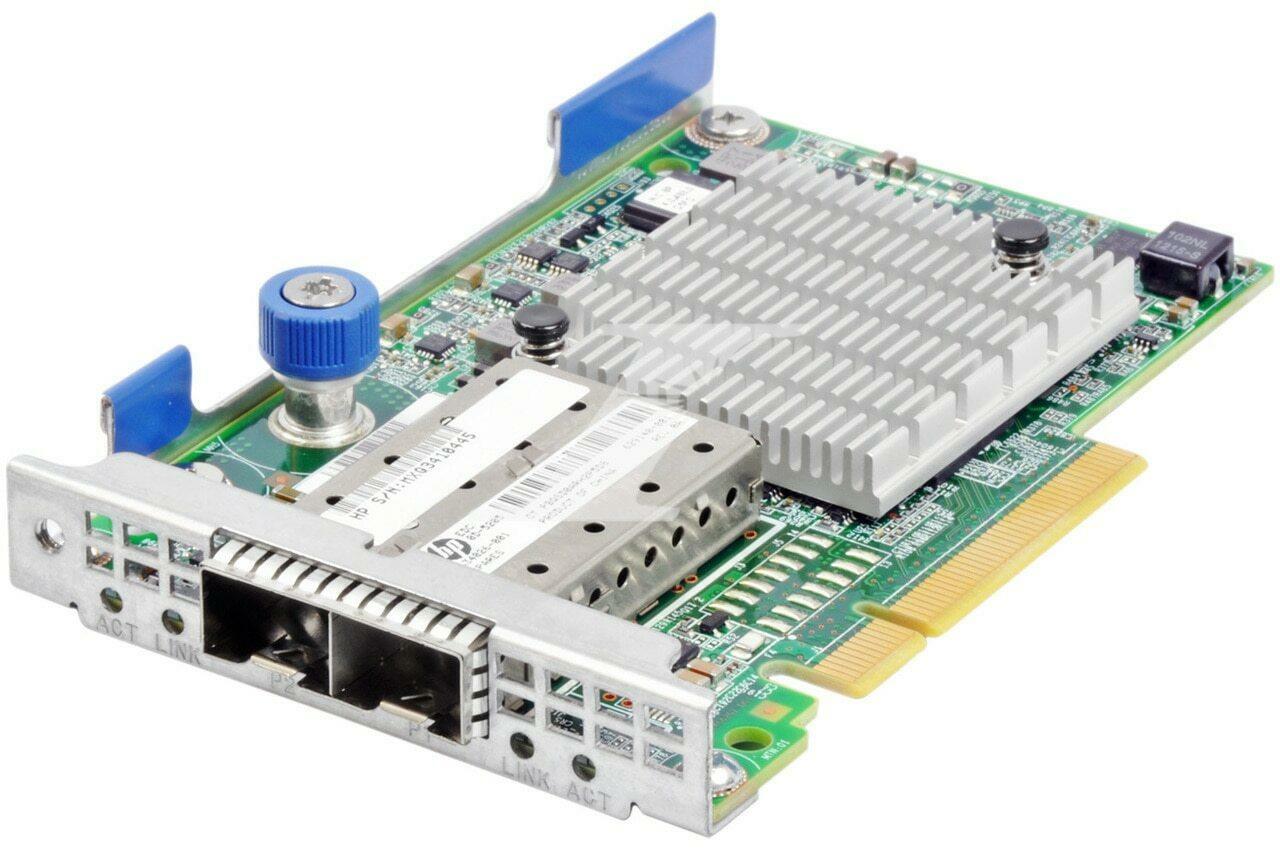
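
As an aside on the /25 remark above, here is a quick sketch of what that split would look like, using Python's standard `ipaddress` module. The variable names are just for illustration, and this only shows the address arithmetic, not anything I have actually configured on the NAS or the nodes.

```python
import ipaddress

# The /24 mentioned above; splitting it is the alternative to adding a switch.
network = ipaddress.ip_network("172.16.10.0/24")

# prefixlen_diff=1 lengthens the prefix by one bit, giving the two /25 halves.
halves = list(network.subnets(prefixlen_diff=1))

for half in halves:
    hosts = list(half.hosts())  # usable addresses (network/broadcast excluded)
    print(f"{half}: {hosts[0]} to {hosts[-1]} ({len(hosts)} usable addresses)")
```

Each half would leave 126 usable addresses, which is far more than two nodes and a NAS port need.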

- Adding a “one litre” PC (such as an HP EliteDesk 705 G3) with Proxmox installed and setting up the DL360 and DL380 as a cluster, using the 705 to complete the quorum. Allowing the 705 (or whatever) access to storage may be beneficial, but the 705 does not have 10 GbE. I *think* that the main nodes need to have storage on the same subnet to work correctly (I haven’t read up on that, yet). The jury is still out on the whole idea given the cost of electricity, heat (not so bad in winter) and noise (the rack sits behind me in my office).
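
On the quorum point in the last item, here is a minimal sketch of why a third box helps. It assumes a plain majority rule with one vote per node, which is my understanding of the default behaviour and not something I have verified on my own setup. The function names `votes_needed` and `has_quorum` are made up for this example.

```python
def votes_needed(total_votes: int) -> int:
    """Strict majority: more than half of all votes (assumed rule)."""
    return total_votes // 2 + 1


def has_quorum(online_votes: int, total_votes: int) -> bool:
    """True if the nodes still online hold a majority of the votes."""
    return online_votes >= votes_needed(total_votes)


# Compare a two-node cluster with a three-node one (one vote per node).
for total in (2, 3):
    needed = votes_needed(total)
    print(f"{total} nodes: need {needed} votes, can lose {total - needed} node(s)")
```

Under that assumption, a two-node cluster loses quorum as soon as either node goes down, while three nodes can tolerate one being offline.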